MolmoAct2 brings open AI models closer to real robots

May 6, 2026

Ai2 released MolmoAct2 with open weights, robotics data and checkpoints. Its real-world value still depends heavily on hardware and safety limits.

What this is about

Ai2, the Allen Institute for AI, released MolmoAct2 on May 5/6, 2026: an open family of action-reasoning models for robots. The release includes model weights, datasets and checkpoints for different robot platforms.

This is more than a model release. While many advanced robotics systems remain closed, Ai2 says it is opening weights, training data and parts of the toolchain. For research labs, makers and industrial teams, that can shorten the distance between a paper and a real robot.

What MolmoAct2 actually does

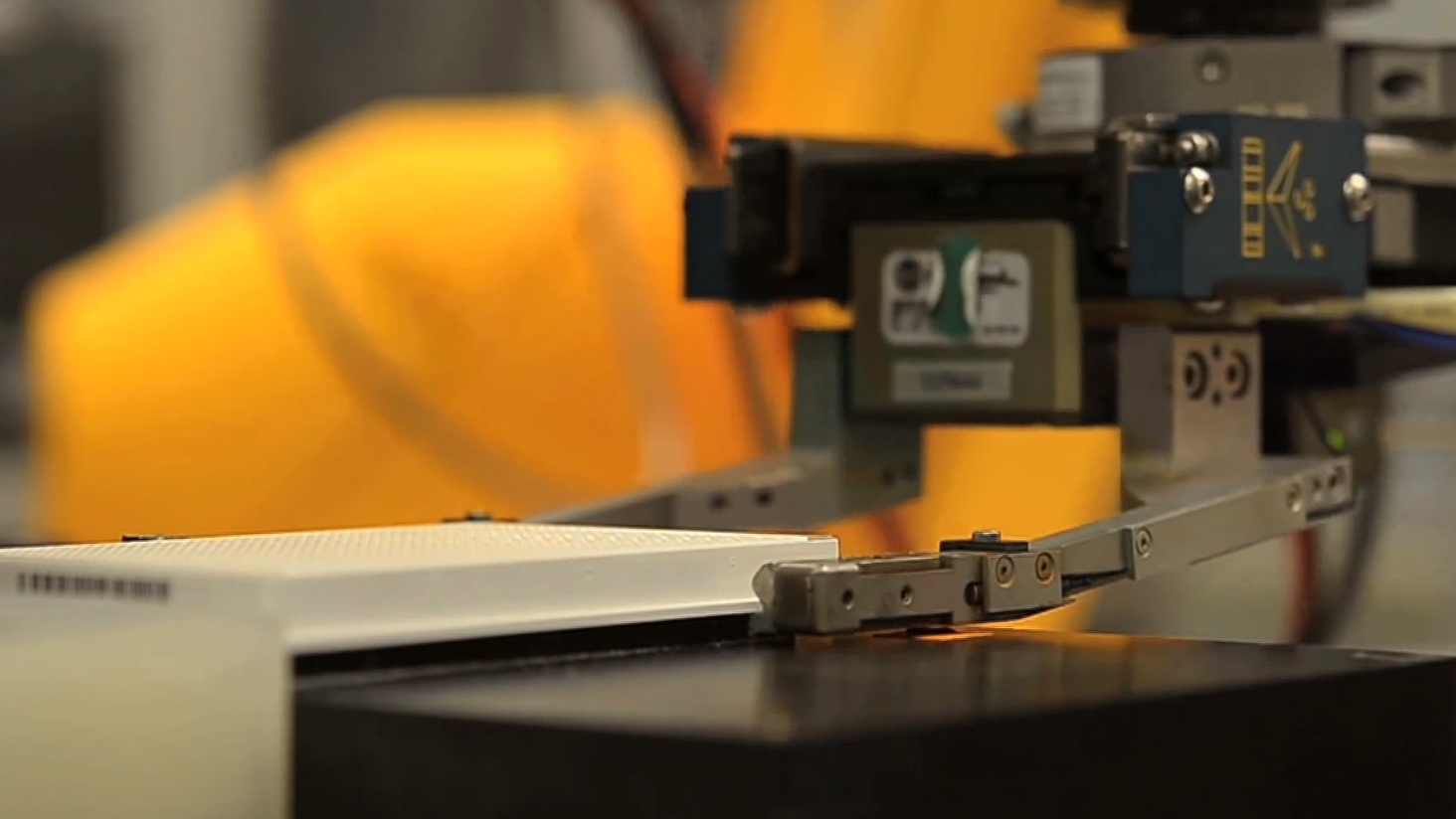

MolmoAct2 combines visual understanding, spatial reasoning and continuous robot actions. It builds on Molmo2-ER, a vision-language model for embodied reasoning. On top of that sits an action expert that turns camera images, robot state and language instructions into movements.

The arXiv preprint lists several building blocks: a 3.3-million-example corpus for spatial and embodied reasoning, 720 hours of bimanual YAM trajectories, filtered DROID and SO-100/101 data, and an open action tokenizer. The Think variant is designed to recompute extra depth information only where the scene has changed.
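The idea of recomputing depth only where the scene has changed can be illustrated with a toy sketch. This is not Ai2's implementation: the tile size, the threshold, the function names `changed_tiles` and `update_depth`, and the `estimate_depth_tile` callback are all hypothetical stand-ins, and a real system would work on camera images rather than small nested lists.

```python
from typing import Callable, Dict, List, Tuple

Frame = List[List[float]]   # grayscale image as rows of pixel intensities
Tile = Tuple[int, int]      # (tile_row, tile_col) index


def changed_tiles(prev: Frame, curr: Frame, tile: int = 2,
                  threshold: float = 0.1) -> List[Tile]:
    """Return tiles whose mean absolute pixel difference exceeds the threshold."""
    rows, cols = len(curr), len(curr[0])
    out: List[Tile] = []
    for r0 in range(0, rows, tile):
        for c0 in range(0, cols, tile):
            diffs = [abs(curr[r][c] - prev[r][c])
                     for r in range(r0, min(r0 + tile, rows))
                     for c in range(c0, min(c0 + tile, cols))]
            if sum(diffs) / len(diffs) > threshold:
                out.append((r0 // tile, c0 // tile))
    return out


def update_depth(cache: Dict[Tile, float], prev: Frame, curr: Frame,
                 estimate_depth_tile: Callable[[Frame, Tile], float],
                 tile: int = 2, threshold: float = 0.1) -> List[Tile]:
    """Refresh cached depth only for changed tiles; return the refreshed tiles."""
    dirty = changed_tiles(prev, curr, tile, threshold)
    for t in dirty:
        # Hypothetical estimator: stands in for an expensive depth computation.
        cache[t] = estimate_depth_tile(curr, t)
    return dirty


# Toy usage: a 4x4 frame where only the bottom-right pixel changes.
prev_frame = [[0.0] * 4 for _ in range(4)]
curr_frame = [row[:] for row in prev_frame]
curr_frame[3][3] = 1.0
depth_cache = {(r, c): 1.0 for r in range(2) for c in range(2)}
refreshed = update_depth(depth_cache, prev_frame, curr_frame,
                         lambda frame, t: 0.5)
```

In this toy case only one of the four tiles is refreshed, which is the point of the approach: the expensive step runs on the part of the scene that moved, and the rest is served from the cache.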

Why it matters

Robotics has an old bottleneck: strong models require expensive hardware, large demonstration datasets and, usually, proprietary training recipes. MolmoAct2 tries to ease that bottleneck. The GitHub repository points to Apache 2.0 licensing, Hugging Face checkpoints and dataset collections for fine-tuning and evaluation.

SiliconANGLE also reports that Ai2 describes real-world tests in lab environments and bimanual tasks. The claim that MolmoAct2 can be up to 37 times faster than its predecessor comes from reporting on Ai2’s own statements and should be read as a vendor claim.

In plain language

Imagine teaching someone to clean a kitchen. A simple robot might memorize: “pick up cup, put down cup.” MolmoAct2 is meant to behave more like a careful helper that asks where the cup is, where there is empty space, what has moved, which hand is free and which movement is safe enough.

A practical example

A university lab wants to move sample plates between two stations. Each day it performs 300 simple manipulations: open a lid, pick up a plate, place it on a device and put it back. With an open model, the team can first test, in simulation and with 20 of its own demonstrations, whether a YAM or SO-101 setup learns similar routines. If the camera, gripper or work surface differs from the training setup, the model needs fine-tuning and safety limits rather than blind deployment.
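To make the numbers in this example concrete, the sketch below estimates how many of the 300 daily manipulations would fail at a given success rate, and how uncertain a success rate measured from a small trial is. The 18-of-20 trial result is hypothetical and not a figure from Ai2, and the Wilson score interval is a standard statistical tool chosen here for illustration, not something the release prescribes.

```python
import math


def expected_failures(daily_tasks: int, success_rate: float) -> float:
    """Expected number of failed manipulations per day."""
    return daily_tasks * (1.0 - success_rate)


def wilson_interval(successes: int, trials: int, z: float = 1.96):
    """Wilson score interval for a binomial success rate (z=1.96 is about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials
                         + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


# Hypothetical pilot: 18 successful runs out of 20 evaluation trials.
low, high = wilson_interval(successes=18, trials=20)

# Translate the interval into failures per day at 300 manipulations.
worst_case = expected_failures(300, low)
best_case = expected_failures(300, high)
```

With 18 successes in 20 trials, the interval runs from roughly 0.70 to 0.97, which corresponds to somewhere between about 8 and 90 expected failures per day. A small pilot can show that a setup is promising, but it cannot justify unsupervised operation on its own.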

Scope and limits

- MolmoAct2 is not a finished home robot. Checkpoints must match hardware, cameras, calibration and task distribution.

- Physical robots need emergency stops, speed limits, force limits and human supervision, especially in labs or workshops.

- An open model does not automatically solve data quality, liability or safety certification. It makes those questions easier to inspect.
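The software side of the limits listed above can be sketched as a thin filter between the model's output and the robot controller. This is an illustrative sketch only: the `SafetyLimits` fields, the numeric values and the `filter_command` function are hypothetical, and software clamping does not replace a hardware emergency stop or a certified safety system.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class SafetyLimits:
    max_joint_speed: float   # rad/s, hypothetical value chosen per robot
    max_force: float         # N, as measured by a wrist or gripper sensor


class ForceLimitExceeded(RuntimeError):
    """Raised when measured force exceeds the limit; the caller must stop the robot."""


def filter_command(joint_velocities: Sequence[float], measured_force: float,
                   estop_pressed: bool, limits: SafetyLimits) -> List[float]:
    """Zero the command on e-stop, raise on excess force, otherwise clamp speed."""
    if estop_pressed:
        return [0.0] * len(joint_velocities)
    if measured_force > limits.max_force:
        raise ForceLimitExceeded(
            f"{measured_force:.1f} N > {limits.max_force:.1f} N")
    cap = limits.max_joint_speed
    return [max(-cap, min(cap, v)) for v in joint_velocities]


# Toy usage: two of three joint velocities exceed the 0.5 rad/s cap.
limits = SafetyLimits(max_joint_speed=0.5, max_force=20.0)
safe = filter_command([0.2, -1.3, 0.9], measured_force=4.0,
                      estop_pressed=False, limits=limits)
```

Keeping the filter separate from the model means the limits stay the same when a checkpoint is swapped or fine-tuned, and they can be reviewed without understanding the model itself.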

💡 In plain English

MolmoAct2 is an open toolkit for robots that need to see, reason in space and then act. It is hands-on research, not an instantly safe everyday robot.

Key Takeaways

- Ai2 released MolmoAct2 as an open action-reasoning model family for robotics.

- The preprint lists 720 hours of bimanual YAM data and additional filtered robotics datasets.

- The release includes checkpoints and data for fine-tuning and evaluation.

- Real deployments need hardware adaptation, safety limits and supervision.

FAQ

Can I run MolmoAct2 on any robot immediately?

No. Checkpoints must match the platform, camera, controller and task.

What is the most important open part?

Weights, datasets and checkpoints make the research easier to inspect and adapt.

Why is bimanual robotics interesting?

Many real tasks need two hands or coordinated motion, such as tidying, lab work or assembly.