AIMap finds exposed AI endpoints before attackers do

May 6, 2026

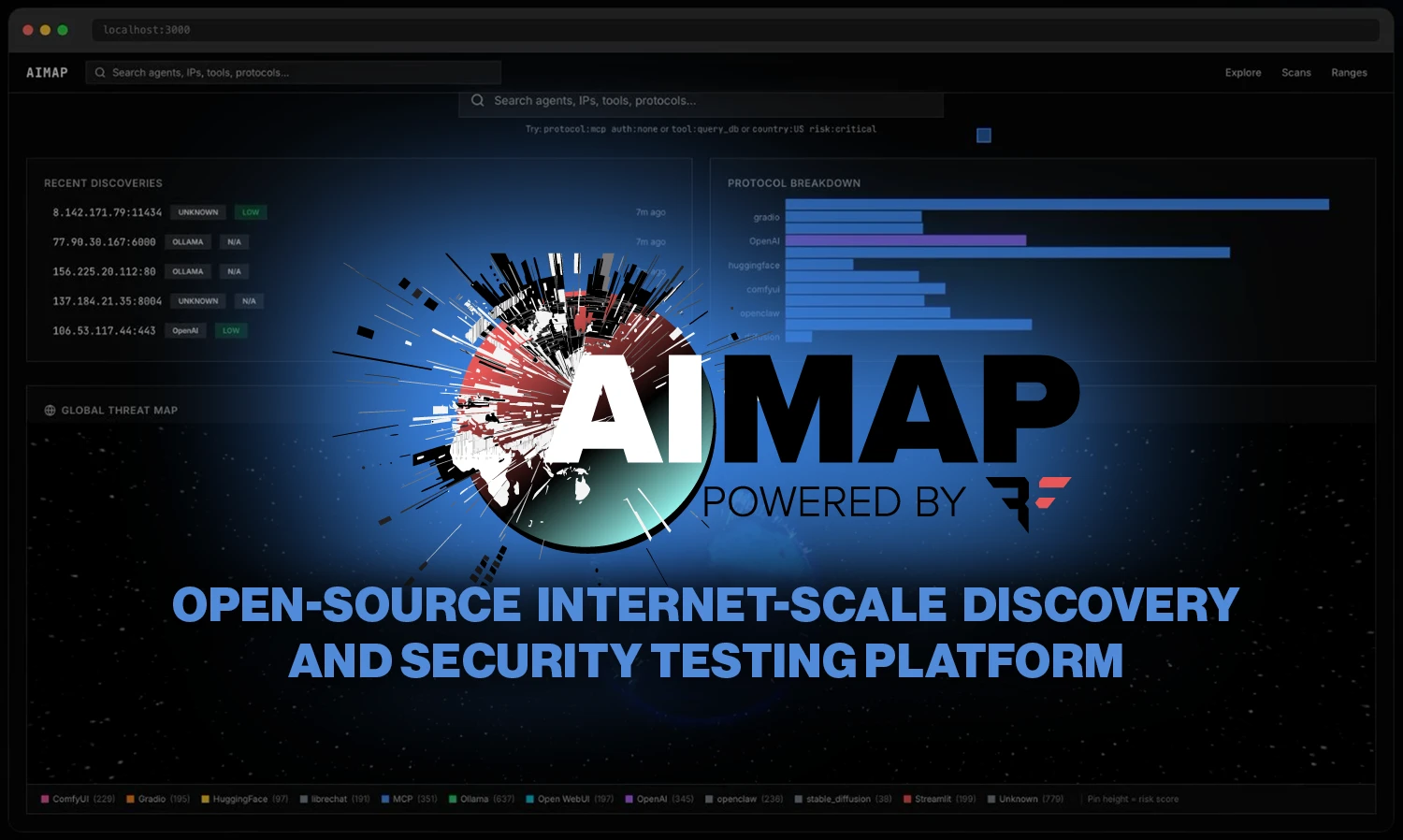

Bishop Fox released AIMap, an open-source tool for finding publicly visible MCP, Ollama, and inference endpoints. For agent security, it is a warning signal.

What this is about

Bishop Fox has released AIMap as an open-source tool. It discovers publicly reachable AI endpoints, fingerprints frameworks such as MCP, Ollama, vLLM, LiteLLM, LangServe, and Gradio, and scores each endpoint on whether it is exposed without authentication, with open tools, or with risky configuration.

This is not just another product update. AI infrastructure is growing faster than traditional asset inventory. Teams start local models, agent servers, or demo UIs, open ports for a proof of concept, and never get around to adding authentication, TLS, or access controls.

What AIMap actually does

AIMap combines discovery, fingerprinting, scoring, testing modules, and visualization. Discovery uses Shodan queries to find potentially public AI and agent endpoints. Nuclei templates and live HTTP checks then determine which protocol or framework is actually responding.

The score runs from 0 to 10. According to the project, points are added for missing authentication, open CORS rules, missing TLS, exposed models, system prompt leaks, and dangerous tool combinations such as unauthenticated code execution. Active attack tests exist, but they are intended only for systems the operator owns or is explicitly authorized to test.
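To make the additive idea concrete, here is a minimal sketch of a capped 0 to 10 score. The weights and the finding names are assumptions chosen for illustration; they are not AIMap's actual values or identifiers, which the project defines itself.

```python
from typing import Iterable

# Hypothetical weights, NOT taken from AIMap. They only illustrate the idea
# that each finding adds points and the total is capped at 10.
WEIGHTS = {
    "no_auth": 4,
    "unauth_code_execution": 3,
    "system_prompt_leak": 1,
    "exposed_models": 1,
    "open_cors": 1,
    "no_tls": 1,
}


def risk_score(findings: Iterable[str]) -> int:
    """Sum the weights of the distinct findings and cap the result at 10."""
    unique = set(findings)
    unknown = unique - set(WEIGHTS)
    if unknown:
        raise ValueError(f"unknown findings: {sorted(unknown)}")
    return min(10, sum(WEIGHTS[name] for name in unique))
```

With these assumed weights, an endpoint with no authentication and no TLS scores 5, while an endpoint with every finding hits the cap of 10.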

The important distinction is visible versus open. A server may be reachable from the internet and still return proper 401 or 403 responses. The risk rises sharply when paths such as /v1/models return 200 and expose models or tools without a login.
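The visible-versus-open distinction can be sketched as a small status-code check. This is an illustration using only the Python standard library, not AIMap's own logic; the function names and category labels are assumptions, and the probe should only ever be pointed at systems you own or are authorized to test.

```python
import urllib.error
import urllib.request
from typing import Optional


def probe_status(url: str, timeout: float = 5.0) -> Optional[int]:
    """Return the HTTP status code for url, or None if it cannot be reached."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # urllib raises for 4xx/5xx responses; the code is still informative.
        return err.code
    except (urllib.error.URLError, OSError):
        return None


def classify_exposure(status_code: Optional[int]) -> str:
    """Map a status code to a coarse exposure category (labels are illustrative)."""
    if status_code is None:
        return "unreachable"
    if status_code in (401, 403):
        return "visible, protected"
    if 200 <= status_code < 300:
        return "open"
    return "inconclusive"
```

For an authorized target, `classify_exposure(probe_status(base_url + "/v1/models"))` would separate a server that answers 401 from one that lists its models without a login. A 200 alone does not prove exploitability, so "open" here means "needs review first".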

Why it matters

Agent infrastructure changes the attack surface. In the past, a forgotten web UI was already a problem. Today, an unprotected MCP server may sit in front of a tool that reads files, queries databases, or runs shell commands. An open port can become not just an information leak but a direct action channel.

Help Net Security cites AIMap demonstration figures of more than 175,000 exposed Ollama instances and more than 8,000 open MCP servers. Those numbers should be treated as a snapshot, but the direction is clear: many AI systems are being deployed outside mature security processes.

For security teams, the uncomfortable part is that many of these services do not look like classic production systems. They are called test, demo, notebook, or agent proxy, so they often never enter CMDBs, firewall reviews, or risk registers. That grey zone is what makes AI endpoints dangerous.

The practical value is therefore less about “hacking” and more about cleanup: which AI services exist, who owns them, which port is open, which token is missing, and which tool must never be public? Without those answers, agent security is flying blind.

In plain language

Imagine an office building where every team quietly adds new doors to the outside. Some doors are locked, some are open, some lead only to a brochure, and some lead to the key room. AIMap is not a guard breaking into buildings. It is a map that shows which doors are visible and which ones need urgent inspection.

A practical example

Suppose a company runs 40 internal AI experiments: five Ollama servers, three MCP prototypes, several Gradio demos, and two OpenAI-compatible proxies. After three months, six are accidentally reachable from the public internet. AIMap could find those endpoints, flag two without authentication, score one MCP server with 12 tools as high risk, and create a priority list: enforce login first, separate dangerous tools next, then fix TLS and CORS.
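The priority list in this example can be sketched as a simple ordering of findings. The `Endpoint` fields, the endpoint names, and the `remediation_plan` helper are hypothetical; they mirror the order described above (login first, dangerous tools next, then TLS and CORS) and do not reflect AIMap's output format.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Remediation steps in the order given in the example above.
STEPS = ("enforce login", "separate dangerous tools", "fix TLS and CORS")


@dataclass
class Endpoint:
    name: str                      # hypothetical label for the service
    has_auth: bool
    dangerous_tools: bool = False
    has_tls: bool = True
    open_cors: bool = False


def remediation_plan(endpoints: List[Endpoint]) -> List[Tuple[str, str]]:
    """Return (step, endpoint name) pairs, most urgent step first."""
    plan = []
    for ep in endpoints:
        if not ep.has_auth:
            plan.append((0, ep.name))
        if ep.dangerous_tools:
            plan.append((1, ep.name))
        if not ep.has_tls or ep.open_cors:
            plan.append((2, ep.name))
    # sort() is stable, so endpoints keep their input order within a step.
    plan.sort(key=lambda item: item[0])
    return [(STEPS[step], name) for step, name in plan]
```

Feeding it an unauthenticated MCP prototype with dangerous tools, an unauthenticated Ollama server, and a Gradio demo without TLS yields two "enforce login" entries first, then the tool separation for the MCP prototype, then the TLS fix for the demo.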

Scope and limits

- AIMap is a security testing tool. It must not be used against third-party systems without explicit permission.

- Result quality depends on Shodan data, fingerprints, and reachable paths. Not every visible service is automatically exploitable.

- The tool does not replace governance. Teams still need inventories, owners, approvals, secret management, and clear rules for agent tools.

💡 In plain English

AIMap looks for AI servers that are accidentally exposed to the internet. It checks whether they are merely visible or actually open, and scores the risk. Such testing must only be done on owned or authorized systems.

Key Takeaways

- AIMap was covered on May 6, 2026 and is available on GitHub.

- The tool fingerprints MCP, Ollama, vLLM, LiteLLM, LangServe, Gradio, and OpenAI-compatible APIs, among others.

- Risk scoring looks at authentication, tools, CORS, TLS, system prompt leaks, and exposed models.

- Active testing is only appropriate for owned or explicitly authorized targets.

- For enterprises, the core issue is not buying a tool but maintaining inventory and ownership for AI endpoints.

FAQ

What is AIMap?

AIMap is an open-source Bishop Fox tool for discovering and assessing exposed AI and agent endpoints.

Can it be used to scan third-party servers?

No. Active testing and scanning should only be performed against owned systems or with explicit written authorization.

Why are MCP servers sensitive?

MCP servers can expose tools that read files, query databases, or perform actions. Without authentication, that can become a serious security risk.